Last week I approved a PR that should not have shipped. The code was clean, all unit tests passed, and the agent that wrote it had followed every convention in our CLAUDE.md. But the feature it built was completely wrong: it implemented exactly what the ticket said, not what Product actually wanted.

A year ago, story refinement would have caught this. We stopped doing it when we decided ceremonies were bad.

You already knew this

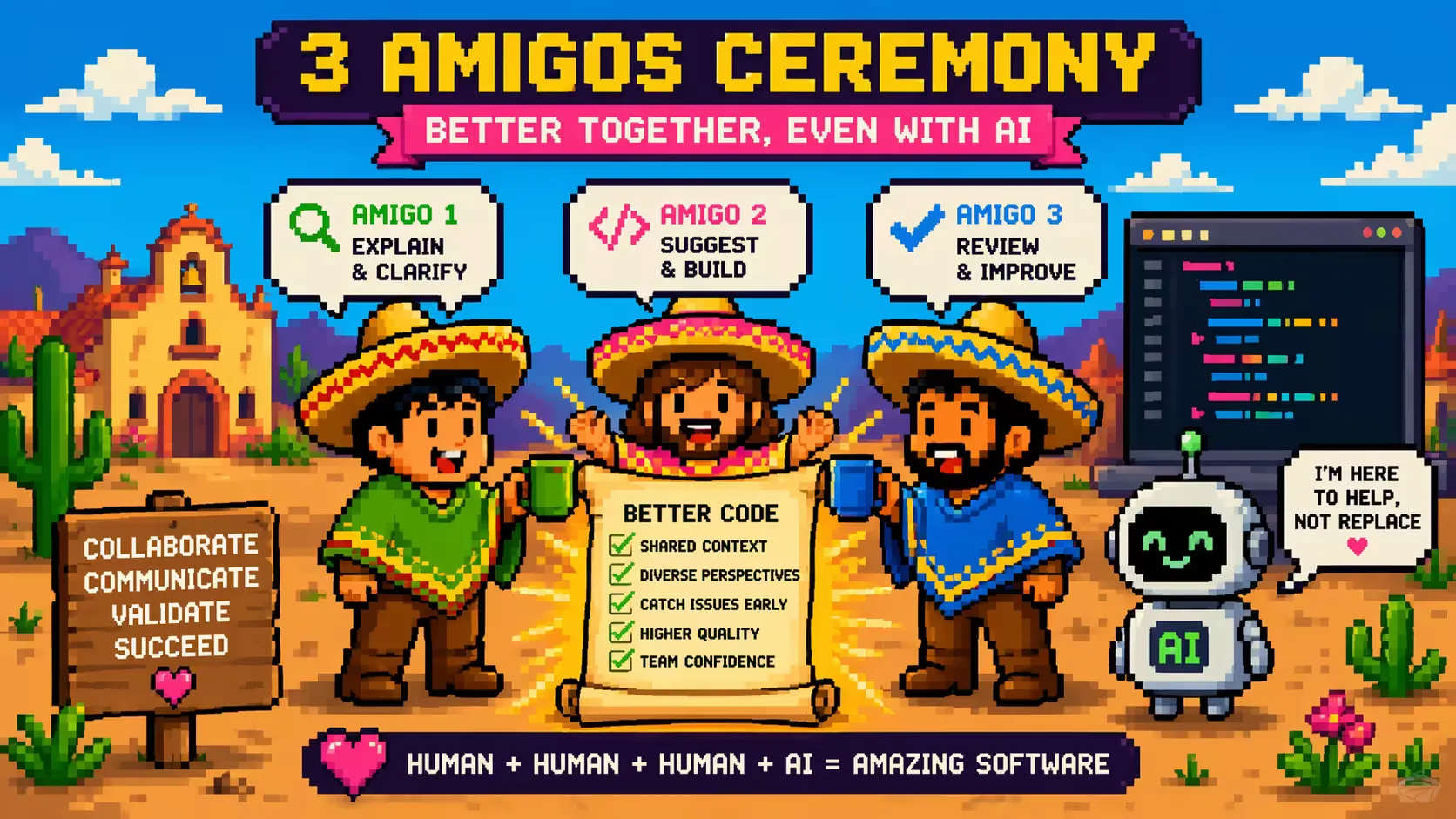

The Three Amigos comes from around 2009, as part of the BDD movement. Before you build a story, you put three people in a room to refine it: a business person who owns the “why”, a developer who owns the implementation and a tester who owns the quality. You work through examples, argue about acceptance criteria and leave with alignment.

It worked because the three roles catch different things. Product mostly thinks about happy paths, developers forget business value and testers forget scope.

Then agile got heavy (SAFe!). Standups became status reports and refinement became a two-hour marathon. The Three Amigos looked like one more meeting, and people stopped showing up.

The human 3 amigos

AI-first engineering has brought it back; we’re just calling it something else.

Business/Product: owns intent

Defines the why, the acceptance criteria and the customer context the agent will never have access to. This is the quality gate all the way to the left. When it’s weak, every downstream check is just confirming that plausible code does plausible things.

Development: directs the agents

Breaks the problem down into actionable prompts and picks the architecture and design patterns. Writes the prompts and analyses the agent’s plan. The shift from implementer to orchestrator I wrote about in Part 2 lives here.

Testing: guards coherence

Watches for architectural cohesion and security issues. Highlights things that seem correct in isolation but are wrong in a production context. This role has to be explicit: if nobody owns it, it defaults to the coding agent, and that’s where the problems from Part 4 come from.

You need all three roles, though one person can wear two hats. What you can’t do is pair an engineer with their coding agent and call that a team.

The March 2026 Amazon incident I covered in Part 3 landed on the same answer from the other direction. Amazon’s SVP of engineering mandated senior sign-off on AI-generated code after an outage forced the issue. That’s the reviewer role being enforced by policy rather than by habit.

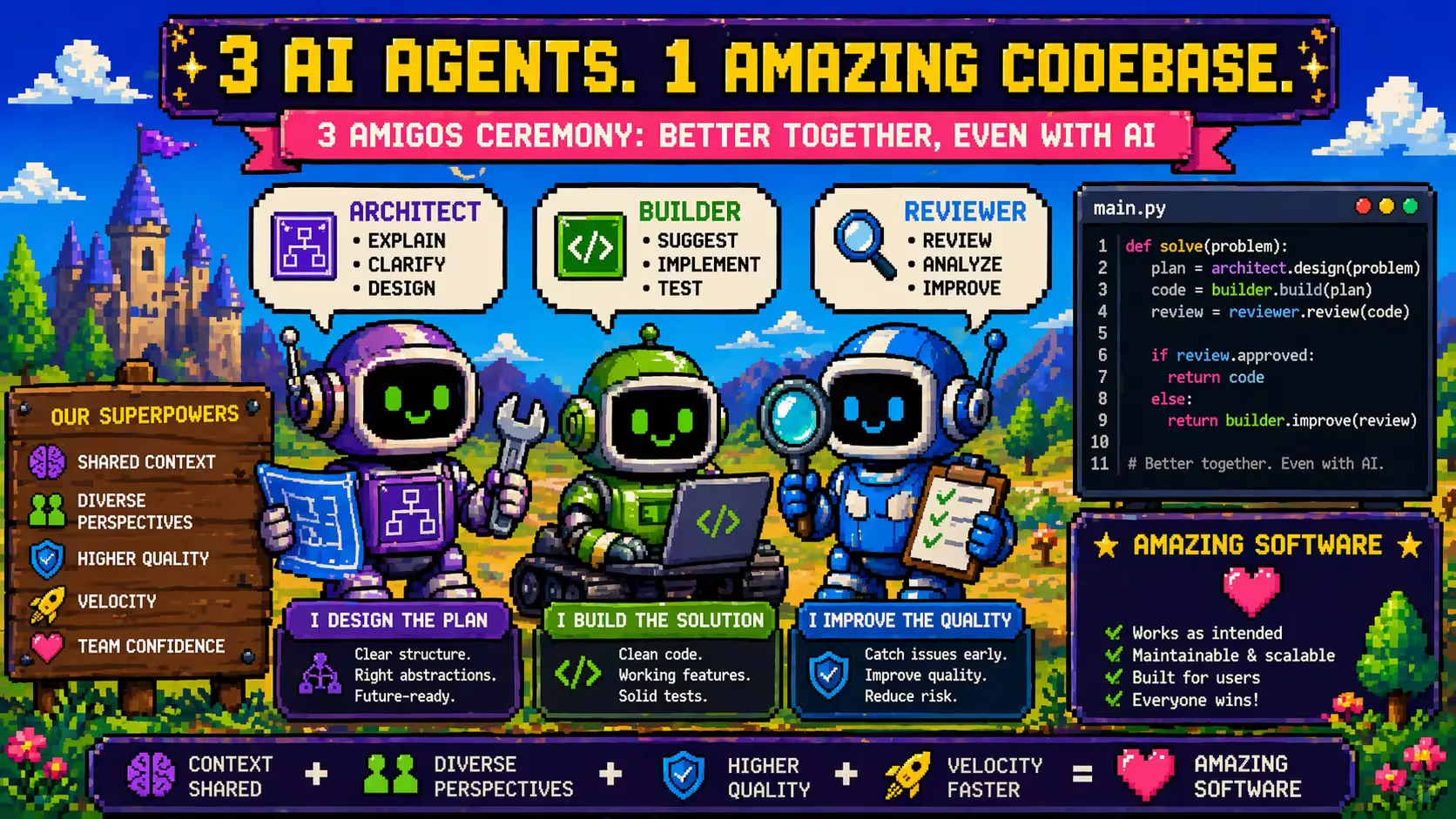

The agent 3 amigos

Here’s where it gets interesting. Look at what people building these systems are converging on:

Steve Yegge’s Gas City essay argues you never run a single agent in production. Any agent can make a bad call, so you deploy fleets that watch each other. The developer becomes a shepherd, tending flocks rather than typing. It’s herding cats, only now for real.

Russell Aaron shipped a tighter version of the same idea as installable Claude Code skills. His three-man-team framework gives you Arch, Bob and Richard: architect plans, builder executes, reviewer critiques. The landing page line: “The solution isn’t a better prompt. It’s a process.” That’s the whole argument in nine words.

None of these people coordinated. They arrived at the same shape by looking at the same problem from different angles. The April 2026 Thoughtworks Technology Radar Vol 34 draws the line for us, naming small “team of coding agents” setups as a recognised practice worth assessing and treating large “coding agent swarms” as a separate, more cautious category.

It’s the three amigos again, this time as agents:

Architect agent: plans

Breaks the work into steps, identifies files to touch, flags risks. Output is something a human or another agent can reason about.

Builder agent: executes

Takes the plan and writes the code.

Reviewer agent: critiques

Reads the diff, runs the tests, checks against standards and flags drift from the plan. It’s the adversarial reviewer.
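To make the hand-offs concrete, here is a minimal Python sketch of the architect, builder and reviewer loop. Everything in it is hypothetical: `Plan`, `Diff`, `architect`, `builder` and `reviewer` are illustrative names, and each agent is a plain function standing in for a model call. The point is the shape: the architect’s plan is structured data, so the reviewer can check the builder’s diff against it mechanically.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    """Architect output: something a human or another agent can reason about."""
    steps: list[str]
    files: set[str]
    risks: list[str] = field(default_factory=list)


@dataclass
class Diff:
    """Builder output: which files changed and whether the tests passed."""
    files_changed: set[str]
    tests_passed: bool


@dataclass
class Review:
    approved: bool
    findings: list[str]


def architect(ticket: str) -> Plan:
    # Hypothetical stand-in for a planning agent; a real one would call a model.
    return Plan(
        steps=[f"Add endpoint for: {ticket}", "Add unit tests"],
        files={"api/orders.py", "tests/test_orders.py"},
        risks=["Touches order totals; check rounding"],
    )


def builder(plan: Plan) -> Diff:
    # Hypothetical stand-in for a coding agent. It also edits a file that is
    # not in the plan, so the reviewer has something to catch.
    return Diff(files_changed=plan.files | {"billing/tax.py"}, tests_passed=True)


def reviewer(plan: Plan, diff: Diff) -> Review:
    """Adversarial check: did the build stay inside the plan, and do tests pass?"""
    findings = []
    drift = diff.files_changed - plan.files
    if drift:
        findings.append(f"Drift from plan: {sorted(drift)}")
    if not diff.tests_passed:
        findings.append("Tests failing")
    return Review(approved=not findings, findings=findings)


plan = architect("export orders as CSV")
review = reviewer(plan, builder(plan))
print(review.approved)   # False
print(review.findings)   # ["Drift from plan: ['billing/tax.py']"]
```

Because the plan lists the files it expects to touch, drift detection becomes a set difference rather than a judgement call, which is exactly the kind of check worth handing to an agent.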

The reviewer problem

Can we fully trust an automated reviewer agent? Conversely, can we fully trust a human reviewer?

Hand the reviewer job entirely to an agent and you’ve built the complacency trap. Two agents agreeing and calling it a day is the most confident wrong signal you can ship to production. The Amazon outage, the event processing lambda I wrote about in Part 1, and the access control gap from Part 4 would all have sailed through agent-on-agent review.

Hand it entirely to a human and you’re perpetuating the bottleneck. One engineer reading a 500-line PR and pretending they caught everything is a rubber stamp with extra steps.

The reviewer role has to split so humans can focus on what cannot be reliably automated:

Agents review for the things agents are good at

Style, conventions, standards enforcement, test coverage, obvious security patterns and drift from the architect’s plan: all the boilerplate, repeatable stuff. Thoughtworks Radar 34 has a name for this layer, “feedback sensors for coding agents”: deterministic quality gates wired into the agent workflow so that failures trigger correction before a human reviewer ever sees the diff.
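A feedback sensor can be as simple as a deterministic function run on every agent output, with failures fed straight back to the agent. The sketch below assumes a toy setup: the sensors are hypothetical string checks, `feedback_loop` and `fake_agent_fix` are illustrative names, and the “agent” just deletes offending lines. In practice the sensors would be your linter, type checker and test suite, and the fix step would re-prompt the coding agent.

```python
from typing import Callable, Optional

# A sensor inspects generated source and returns a failure message, or None.
# Deterministic by design: the same input always gets the same verdict.
Sensor = Callable[[str], Optional[str]]


def no_debug_prints(src: str) -> Optional[str]:
    return "remove debug print() calls" if "print(" in src else None


def no_todos(src: str) -> Optional[str]:
    return "resolve TODO markers" if "TODO" in src else None


def max_line_length(limit: int) -> Sensor:
    def check(src: str) -> Optional[str]:
        long_lines = [n for n, line in enumerate(src.splitlines(), 1) if len(line) > limit]
        return f"lines over {limit} chars: {long_lines}" if long_lines else None
    return check


def run_sensors(src: str, sensors: list[Sensor]) -> list[str]:
    return [msg for sensor in sensors if (msg := sensor(src)) is not None]


def feedback_loop(src: str, sensors: list[Sensor],
                  agent_fix: Callable[[str, list[str]], str],
                  max_rounds: int = 3) -> tuple[str, list[str]]:
    """Check, hand failures back to the agent, re-check. Whatever still fails
    after max_rounds is returned so a human sees it explicitly."""
    failures = run_sensors(src, sensors)
    rounds = 0
    while failures and rounds < max_rounds:
        src = agent_fix(src, failures)
        failures = run_sensors(src, sensors)
        rounds += 1
    return src, failures


def fake_agent_fix(src: str, failures: list[str]) -> str:
    # Hypothetical stand-in for re-prompting the coding agent with the failures.
    # It simply drops offending lines so the example runs without a model.
    kept = [line for line in src.splitlines() if "print(" not in line and "TODO" not in line]
    return "\n".join(kept)


draft = "def total(xs):\n    # TODO: handle rounding\n    print(xs)\n    return sum(xs)\n"
sensors = [no_debug_prints, no_todos, max_line_length(100)]
final, still_failing = feedback_loop(draft, sensors, fake_agent_fix)
print(repr(final))      # 'def total(xs):\n    return sum(xs)'
print(still_failing)    # []
```

Capping the rounds matters: anything still failing after `max_rounds` is surfaced rather than retried forever.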

Humans review for the things humans are good at

Intent, fit, product sense, the subtle architectural call, the “this is locally correct and globally wrong”. The adversarial human imagination (tribal knowledge?) about what could go wrong that no checklist fully captures.

This boundary has to be explicitly written down. If you leave it implicit, humans drift toward rubber stamping and agents drift toward owning decisions they aren’t qualified to make. In our setup, the split lives in the review template. The agent posts a structured summary with standards checks on one side and open questions flagged for the human on the other. The human reviewer reads the flagged questions and the architectural shape, not the diff line by line.
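One way to write the boundary down is to make the reviewer agent’s summary a fixed structure with two halves. This is a sketch under assumptions: `ReviewSummary`, its fields and the check names are hypothetical, and it illustrates the idea rather than reproducing any particular team’s template. Failed standards go back to the agent; the open questions are what the human reads.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewSummary:
    """Hypothetical shape for the reviewer agent's structured PR comment."""
    standards: dict[str, bool]                          # agent half: check -> passed
    questions: list[str] = field(default_factory=list)  # human half: open questions

    def failed_standards(self) -> list[str]:
        # These go back into the agent loop rather than to the human.
        return [name for name, ok in self.standards.items() if not ok]

    def render(self) -> str:
        lines = ["## Standards (checked by reviewer agent)"]
        lines += [f"- [{'x' if ok else ' '}] {name}" for name, ok in self.standards.items()]
        lines += ["", "## Open questions for the human reviewer"]
        lines += [f"- {q}" for q in self.questions] or ["- None flagged"]
        return "\n".join(lines)


summary = ReviewSummary(
    standards={
        "Lint and formatting": True,
        "Tests added for new behaviour": True,
        "Matches architect plan": False,
    },
    questions=["Ticket says 'export all orders'. Should cancelled orders be included?"],
)
print(summary.render())
```

The question in the example is the kind of intent gap no standards check will catch, which is why it lands in the human half.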

Two sets of amigos at once

The human 3 amigos and the agent 3 amigos run at the same time on the same work. The human 3 amigos set the direction and constraints and the agent 3 amigos execute inside those boundaries. When it works, the engineer spends most of the day steering and herding and very little of it typing.

What to do on Monday morning

- Name the three human roles on your next piece of work. Redefine and relaunch the 3 amigos ceremony. Ignore current job titles and empower the people most suitable for each role. If the same person is wearing two hats, write it down. If there are hats with no heads, fix that before the agent starts generating code.

- Split your review template in two. One half for the reviewer agent: standards, conventions, plan adherence. One half for the human reviewer: intent, fit, architectural alignment.

- Make the architect step visible. Before any non-trivial agent run, produce a plan the human can review in sixty seconds. If the agent is writing code before a human has seen the plan, you’ve skipped the most important of the 3 amigos.

- Encode your standards where the agent will read them: CLAUDE.md, AGENTS.md, whichever file your tool reads. Watch the size, though; bloat has bitten me a few times already, and I’m still early on this journey. Enforce everything you can in CI gates.
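The “plan before code” rule can be enforced mechanically rather than by habit. A minimal sketch, assuming a hypothetical `ArchitectPlan` record and `start_builder` entry point: the builder refuses a non-trivial task until a human has signed off on the plan, and `fits_sixty_seconds` uses item count as a rough, made-up proxy for “reviewable in a minute”.

```python
from dataclasses import dataclass
from typing import Optional


class PlanNotApproved(Exception):
    pass


@dataclass
class ArchitectPlan:
    steps: list[str]
    files: list[str]
    risks: list[str]
    approved_by: Optional[str] = None  # set when a human signs off

    def fits_sixty_seconds(self, max_items: int = 12) -> bool:
        # Hypothetical heuristic: a plan with few items is quick to review.
        return len(self.steps) + len(self.files) + len(self.risks) <= max_items


def start_builder(plan: ArchitectPlan, non_trivial: bool = True) -> str:
    """Refuse to hand a non-trivial task to the coding agent before a human has seen the plan."""
    if non_trivial and plan.approved_by is None:
        raise PlanNotApproved("architect plan has not been reviewed by a human")
    return f"builder started: {len(plan.steps)} step(s)"


plan = ArchitectPlan(
    steps=["Add CSV export endpoint", "Add tests for empty and large exports"],
    files=["api/orders.py", "tests/test_orders.py"],
    risks=["Large exports may time out"],
)
try:
    start_builder(plan)
except PlanNotApproved as err:
    print("blocked:", err)   # blocked: architect plan has not been reviewed by a human

plan.approved_by = "dev-lead"  # hypothetical reviewer handle
print(start_builder(plan))     # builder started: 2 step(s)
```

The same gate could sit in front of whatever launches your agent runs, so skipping the plan review becomes an error rather than a temptation.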

The original 3 amigos worked because three perspectives catch what one or two miss. What’s changed is that agents can now do the heavy lifting under human coordination.

I’m still working this one out. If you’ve found a cleaner split between human and agent review, or a different shape of the 3 amigos that’s working in your team, I’d like to hear it.